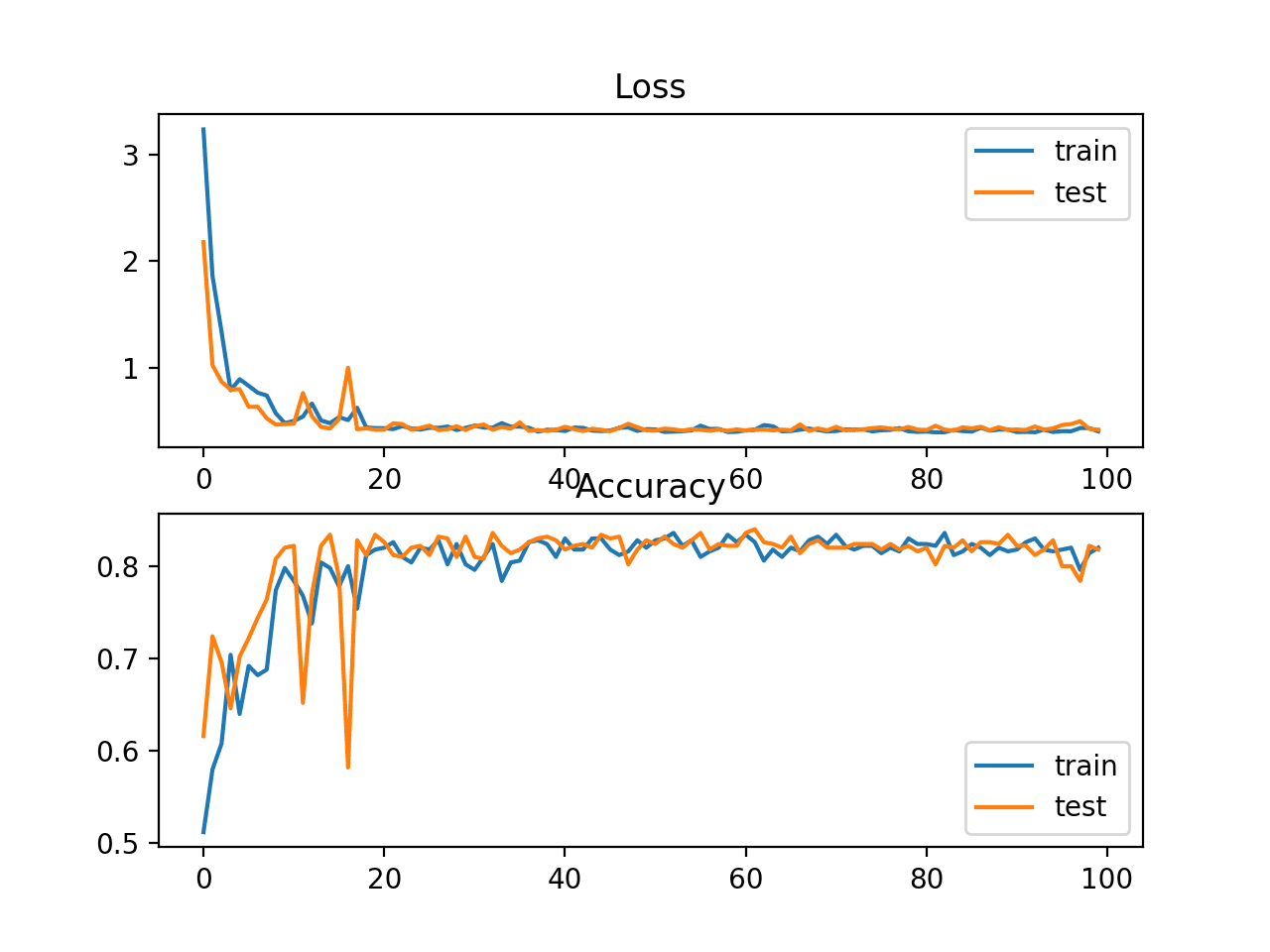

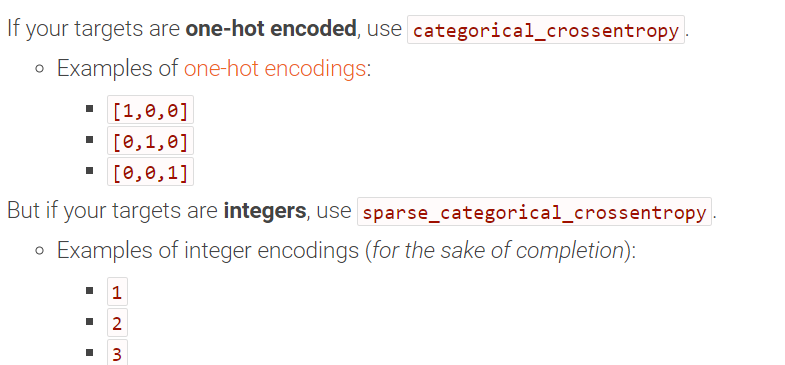

Creating neural networks for regression, classification, or image recognition is a trial-and-error process. The accompanying figure shows the overall trial-and-error process for deep learning-based models.

This process includes selecting a suitable model for the problem at hand, fine-tuning all hyperparameters, and selecting the right data model via feature selection. Once a suitable model is selected, it is trained until further training no longer improves accuracy. If testing does not yield favorable results for the business, the model is discarded, and the cycle repeats.

AutoKeras greatly reduces these manual trial-and-error cycles by conducting the experiments itself and arriving at a suitable model selection and Keras layer architecture for the model. AutoKeras can experiment with structured data, image or text classification and regression jobs, time series forecasting, and multi-modal jobs. Essentially, AutoKeras automates the ML development process. The API it provides sits at a higher level than the Keras model of layers and connections.

Example - Image Recognition with TensorFlow and Keras

In our hypothetical scenario, a developer is tasked with classifying handwritten digit images from the well-known MNIST dataset, which can be loaded through `keras.datasets.mnist` or fetched via Scikit-Learn. MNIST is a database of handwritten digits with a training set of 60,000 examples and a test set of 10,000 examples. Each image is 28 x 28 pixels with a single channel (monochrome).

The developer's first attempt would be to model this problem using Keras dense layers. The accompanying code figure shows the sample code our developer has crafted; it trains the model with `mlp_model.fit(train_images, train_labels, epochs=5, batch_size=64, verbose=1)` and evaluates it with `test_loss, test_acc = mlp_model.evaluate(test_images, test_labels, verbose=0)`.

In this model, the input feature tensor of 28 x 28 x 1 is first flattened to a 1 x 784 tensor. A dense layer then computes the dot product of the input and the learned weights using the formula `output = activation(dot(input, kernel) + bias)`.
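The dense-layer step in this example (flattening a 28 x 28 x 1 image to a 1 x 784 tensor, then taking the dot product with the layer's weights) can be sketched in plain NumPy. This is only an illustration, not the developer's Keras code: the layer sizes (128 hidden units, 10 output classes), the helper names, and the random stand-in image and weights are all assumptions made for the sketch. In a real model the weights would be learned during training.

```python
import numpy as np


def dense_forward(x, kernel, bias, activation=None):
    """Forward pass of one dense layer: activation(dot(x, kernel) + bias)."""
    z = np.dot(x, kernel) + bias
    return z if activation is None else activation(z)


def relu(z):
    return np.maximum(z, 0.0)


def softmax(z):
    # Subtract the row-wise max for numerical stability.
    e = np.exp(z - z.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


rng = np.random.default_rng(0)

# Random stand-in for a single 28 x 28 x 1 MNIST image (assumption: no real data here).
image = rng.random((28, 28, 1))

# Flatten 28 x 28 x 1 into a 1 x 784 row vector.
flat = image.reshape(1, -1)

# Hypothetical layer sizes: 784 inputs -> 128 hidden units -> 10 digit classes.
# Weights are random placeholders, not learned values.
w1, b1 = rng.normal(scale=0.05, size=(784, 128)), np.zeros(128)
w2, b2 = rng.normal(scale=0.05, size=(128, 10)), np.zeros(10)

hidden = dense_forward(flat, w1, b1, relu)
probs = dense_forward(hidden, w2, b2, softmax)

print(flat.shape)   # (1, 784)
print(probs.shape)  # (1, 10)
```

Each row of `probs` sums to 1, so it can be read as a score per digit class. With untrained weights the scores carry no meaning; training is what adjusts `w1`, `b1`, `w2`, and `b2` toward useful values.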
